Problem Framing in Medical Imaging AI

Two teams can work on the “same” medical imaging task and end up building systems that behave very differently in the real world.

The final result depends on the sequence of decisions made throughout the project, often going all the way back to the earliest stages, where the problem itself is defined.

At that point, the main risk is not choosing the wrong model. It is moving forward with an under-defined problem: jumping into execution before the project’s terms are explicit and shared across stakeholders.

So what does problem framing actually mean?

Problem framing is deciding what the model is allowed to learn, how it will be evaluated, and what its results can legitimately claim.

For example, it defines:

- what counts as a valid prediction

- what performance actually measures

- what conclusions can be drawn from the system

Clarity at this stage is not a formality. It is what makes the difference between a black-box model that performs and a system that can be trusted.

These decisions are also difficult to revisit later. Once development starts, they are already embedded in the dataset, the labels, and the evaluation setup.

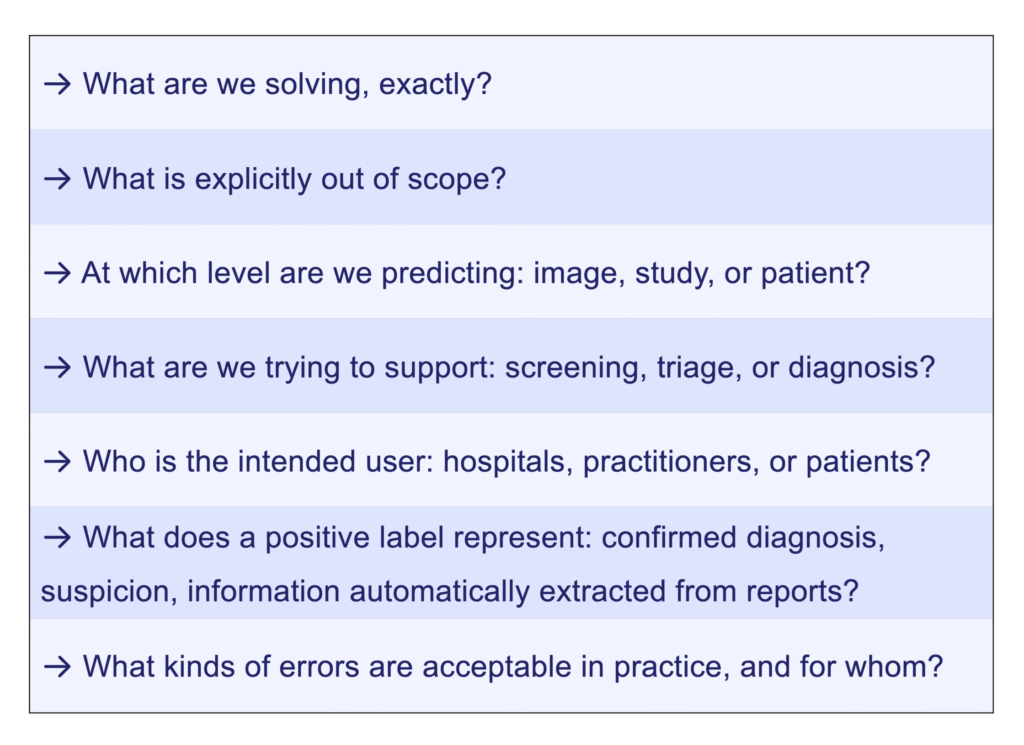

In practical terms, problem framing means making explicit the questions that are often left implicit.

Where does ambiguity come from?

Ambiguity does not come from complexity alone. It comes from decisions that remain implicit.

It typically lies in:

- Intended use: Screening, triage, diagnosis, real-time application, surgery planning, or research do not imply the same constraints or expectations.

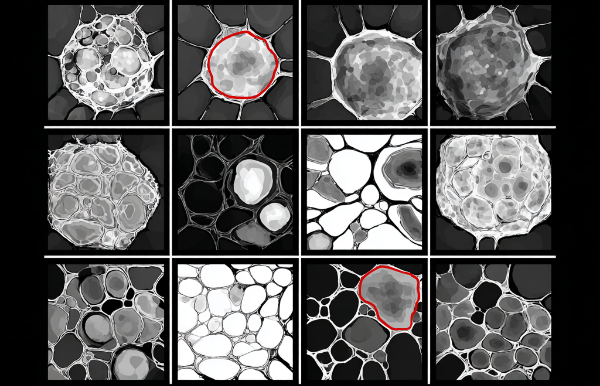

- Unit of analysis: Image-level, study-level, or patient-level predictions lead to fundamentally different systems.

- Labels: Diagnosis, suspicion, or report-derived labels do not carry the same meaning or the same degree of certainty. If labels reflect uncertainty, is this captured explicitly (e.g. as a confidence score or alternative labels)?

- Evaluation setup: What is measured is not always what is claimed. Performance metrics often reflect the dataset structure more than real-world behavior.
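To make the unit-of-analysis point concrete, here is a minimal sketch in Python. The patient IDs, scores, labels, threshold, and the "positive if any image is positive" aggregation rule are all invented assumptions for illustration, not taken from a real dataset or a recommended method. It shows how the same image-level outputs can yield different accuracy figures depending on the level at which predictions are counted.

```python
from collections import defaultdict

# Hypothetical image-level outputs: (patient_id, model_score, image_label).
# All values are invented for illustration only.
image_predictions = [
    ("p1", 0.9, 1), ("p1", 0.2, 0), ("p1", 0.1, 0),
    ("p2", 0.3, 0), ("p2", 0.4, 0),
    ("p3", 0.6, 0), ("p3", 0.2, 0),
    ("p4", 0.4, 1), ("p4", 0.1, 0),
]
THRESHOLD = 0.5  # assumed operating point


def accuracy(pairs):
    """Fraction of (predicted, actual) pairs that agree."""
    pairs = list(pairs)
    return sum(pred == actual for pred, actual in pairs) / len(pairs)


# Image-level view: every image counts as one prediction.
image_accuracy = accuracy(
    (int(score >= THRESHOLD), label) for _, score, label in image_predictions
)

# Patient-level view: a patient is positive if any of their images is
# positive (max aggregation is one possible rule, assumed here).
scores = defaultdict(list)
labels = defaultdict(list)
for patient_id, score, label in image_predictions:
    scores[patient_id].append(score)
    labels[patient_id].append(label)

patient_accuracy = accuracy(
    (int(max(scores[pid]) >= THRESHOLD), max(labels[pid])) for pid in scores
)

print(f"image-level accuracy:   {image_accuracy:.2f}")
print(f"patient-level accuracy: {patient_accuracy:.2f}")
```

With this toy data, 7 of 9 images are classified correctly, but only 2 of 4 patients are. Neither number is wrong; they answer different questions, which is why the unit of analysis has to be fixed before results are reported.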

What does a well-framed problem actually change?

A well-defined problem does not guarantee a good model. However, an under-defined problem almost guarantees misleading results.

The impact of problem framing extends well beyond technical performance. It shapes the entire project, from the first team decisions to the final regulatory submission.

For teams and stakeholders

When the problem is explicit, everyone involved — data scientists, clinicians, product managers, and regulatory teams — works from the same definitions. What is being predicted, at which level, and for which use case is no longer a matter of interpretation. This reduces misalignment, accelerates decision-making, and makes the project easier to manage across disciplines.

For development

A clear problem framing defines the system’s boundaries: what the model is designed to do and, explicitly, what it is not designed to do. This makes the development roadmap more precise, the technical choices easier to justify, and future evolution easier to plan. Scope decisions are documented from the start, not reconstructed after the fact.
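One possible way to document scope decisions from the start is to keep them in a small, immutable record next to the code. The sketch below is only an illustration: the class name `ProblemFraming`, its fields, and the example values are hypothetical and would need to be adapted to each project.

```python
from dataclasses import dataclass
from enum import Enum


class UnitOfAnalysis(Enum):
    IMAGE = "image"
    STUDY = "study"
    PATIENT = "patient"


@dataclass(frozen=True)  # frozen: the framing cannot be silently edited later
class ProblemFraming:
    """Hypothetical record of the framing decisions for one project."""

    intended_use: str
    unit_of_analysis: UnitOfAnalysis
    label_source: str
    primary_metric: str
    out_of_scope: tuple[str, ...]

    def is_out_of_scope(self, use_case: str) -> bool:
        """True if the use case was explicitly excluded at framing time."""
        return use_case in self.out_of_scope


# Example values are invented for illustration.
framing = ProblemFraming(
    intended_use="triage",
    unit_of_analysis=UnitOfAnalysis.PATIENT,
    label_source="report-derived",
    primary_metric="sensitivity at a fixed specificity",
    out_of_scope=("pediatric patients", "standalone diagnosis"),
)

print(framing.is_out_of_scope("pediatric patients"))
```

Because the record is frozen, changing the framing requires creating a new record, which leaves a visible trace in version control instead of a quiet drift in definitions.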

For evaluation

When the problem is well framed, performance coverage and limitations become explicit. Metrics reflect real-world constraints, not just dataset structure. Benchmarking choices become grounded and reproducible. The system can be evaluated consistently over time, and its evolution can be tracked meaningfully.
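A small worked example shows how a metric can reflect dataset structure more than real-world behavior. The sketch below applies the standard definition of positive predictive value (PPV) to a model with fixed sensitivity and specificity, under two prevalence levels. The numbers (90% sensitivity and specificity, 50% and 1% prevalence) are assumptions chosen for illustration only.

```python
def positive_predictive_value(sensitivity, specificity, prevalence):
    """PPV = TP / (TP + FP), expressed as population fractions."""
    true_positives = sensitivity * prevalence
    false_positives = (1 - specificity) * (1 - prevalence)
    return true_positives / (true_positives + false_positives)


# Same hypothetical model, two different populations.
ppv_enriched = positive_predictive_value(0.90, 0.90, prevalence=0.50)
ppv_screening = positive_predictive_value(0.90, 0.90, prevalence=0.01)

print(f"PPV on a balanced test set (50% prevalence): {ppv_enriched:.3f}")
print(f"PPV in a screening setting (1% prevalence):  {ppv_screening:.3f}")
```

On the balanced test set, 9 out of 10 positive calls are correct. At 1% prevalence, the same model is right on roughly 1 positive call in 12. A PPV reported without the intended-use prevalence therefore describes the dataset, not the deployment setting.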

For regulatory and clinical trust

A well-defined system with clearly scoped behavior can be defended before regulators, clinical users, patients, and other decision-makers.

Problem framing is not just a technical foundation. It is the condition under which a system’s behavior can be understood, questioned, and defended by the people who rely on it.

Conclusion

In medical imaging, clarity does not come from better models. It comes from making the problem explicit enough that the results can be interpreted, trusted, and acted upon.

Problem framing is the first step towards that clarity, and towards a traceable and trustworthy system.

◆ I work with medical imaging AI teams on questions related to data readiness, evaluation rigor, and technical defensibility across the AI R&D lifecycle.
